Existential Risk?

From AIs to politics, people are using the specter of existential risk to justify extreme measures and absolute levels of centralized control. Let's dig into this.

The big problem with politics in the current globalized, networked environment is that getting people to agree on anything positive is seemingly impossible.

However, getting people to agree and mobilize in response to a common threat is possible.

We see this dynamic everywhere, from anti-racism to anti-fascism to anti-trans to anti-anti-trans, and from protests to elections to war (Russia/China).

Worse, since networked political power is a function of identifying and opposing a threat, it’s led to the rampant intensification of threats to increase political power (see the Global Guerrillas Report: Gleichschaltung for more).

Amplification of Threats

This intensification process is where the phrase “existential risk, threat, or struggle” comes into play. Objectively, it’s a term that should only be used to describe something that could destroy humanity, civilization, etc. Given this definition, it’s a label that should be used sparingly, mainly because it can be used to justify extreme actions in response.

Earlier successful misuses of the term show what can go wrong. For example:

terrorism (2001),

financial collapse (2008), and

the pandemic (2020).

In each previous case, existential hype justified actions that have proved far more harmful over the long term than the danger posed by the threat itself — from the invasion of Iraq (WMDs) to domestic counter-terrorism measures (think Patriot Act) to the decision not to prosecute bankers for the frauds that led to the financial crisis to statewide lockdowns and school closures.

Existential Political Threats

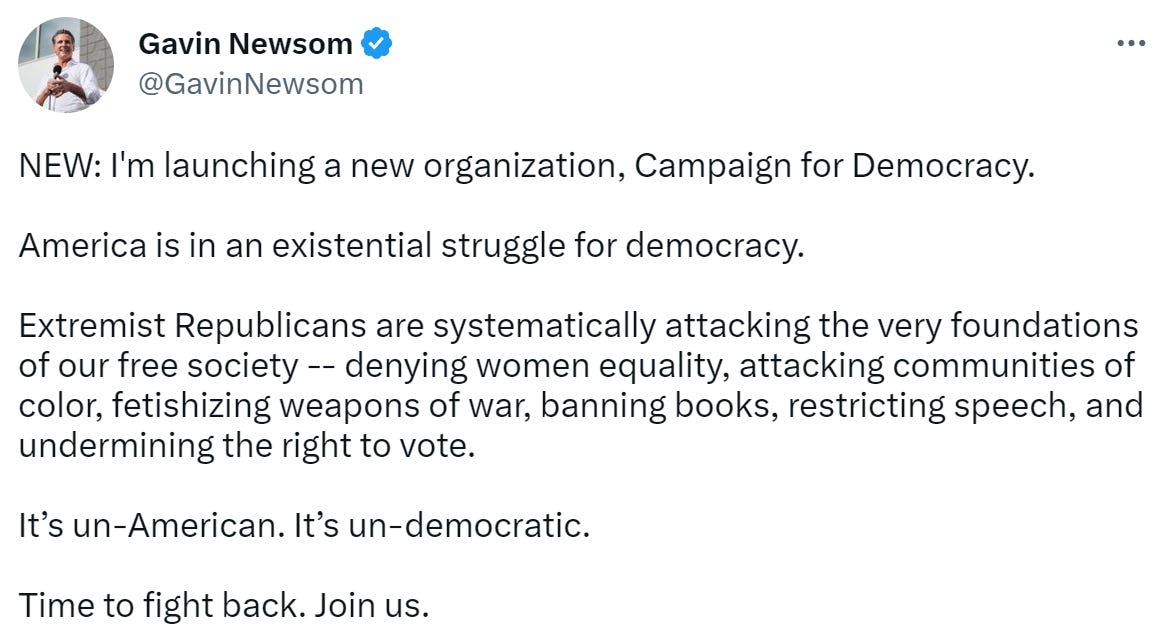

Since 2020, the term existential threat has been used to describe the political opposition (both sides use it, although it is far more prevalent on the left). Here’s a recent example from Governor Gavin Newsom’s soft launch of his presidential campaign.

The existential threat framework offers valuable insight into recent political developments. Let’s dig into this.

The characterization of Trump and his supporters as existential threats during the 2020 presidential campaign made it possible to anticipate the justification of the following developments (this is from the October 2020 Global Guerrillas Report: DeTrumpification):

The government (at every level) would wage lawfare against Trump and his associates — from criminal prosecution to hearings.

Corporations would be called upon to disconnect Trump from social media and impose algorithmic censorship to ensure social stability and ‘positive’ election outcomes.

The government would change the political dynamic and wage a counter-insurgency effort to suppress Trump supporters (particularly if an extreme event from the right could be used to justify it).

We’ve seen all of this happen.

Lawfare. Trump’s impending arrest on criminal charges and the Congressional Jan 6th hearings.

Corporate censorship. Corporations disconnected Trump and his supporters from social media and implemented a sweeping censorship regime (Twitter Files). Musk's unexpected acquisition of Twitter has reversed much of this, but Congress is working on ways to undo that reversal.

Change the dynamic. The characterization of Trump as an existential threat was expanded (using Trump’s ‘collusion’ with Russia) to include Putin (and now Xi). We are now at war with Russia (and soon China). This frame's expansion transforms Trump and his supporters into a disloyal faction allied with foreign enemies and all that entails legally.

Evidently, the ongoing and potentially intensifying characterization of the political opposition as an existential threat (Newsom is signaling this) will lead us toward extreme authoritarianism. If the sweeping overreach of the proposed RESTRICT Act is an indicator, we’re already there.

Existential AI Risk

About a decade ago, the author Nick Bostrom and the “Centre for the Study of Existential Risk” at Cambridge started an esoteric public discussion on the existential risk posed by AI. Now, with the advent of GPT-3 and GPT-4, the threat posed by AI has become exploitable.

Recently, thousands of prominent scientists, tech entrepreneurs, and philosophers have signed an open letter to make the following demand:

“we call on all AI labs to immediately pause for at least 6 months the training of AI systems more powerful than GPT-4”

The claim in the letter is that “Advanced AI could represent a profound change in the history of life on Earth” and inflict the following ‘existential’ harms:

Disinformation. “Should we let machines flood our information channels with propaganda and untruth?”

Job destruction. “Should we automate away all the jobs, including the fulfilling ones?”

AIs take over (and kill us). “Should we develop nonhuman minds that might eventually outnumber, outsmart, obsolete, and replace us? Should we risk the loss of control of our civilization?”

Unsurprisingly, given our experiences with the existential threats posed by terrorism and COVID, the signatories are demanding new control and governance systems, new tracking systems (leakage and computational resources), copyright and disinformation barriers, and new government programs/institutions. While it’s unlikely that this letter will result in a pause in development, the invocation of an existential AI threat will likely be used by those who control these systems to justify absolute control over them (“only we can keep you safe”).

Unfortunately, this existential threat hype is as unwarranted as the previous examples. Here’s why:

Disinformation. AI disinformation capabilities are only very dangerous in the hands of corporations and governments with access to critical systems and unlimited computing resources (who else can insert disinformation into billions of conversations between individuals and AIs?). Outsiders don’t have that access.

Jobs. Anticipating the future is hard, and it’s impossible without factual evidence that the trend you are expecting is happening. Conclusion: wait until the evidence is available. Regardless, if there are adverse wealth effects at the societal level from AIs, they’re FAR more likely to occur if only a few big AIs are controlled by a small number of people.

AIs take over. This threat is based on the assumption that the AIs we are developing are on the road to artificial general intelligence, an intelligence comparable to a human mind. What are they actually doing? They simulate intelligence based on collected data, which makes them handy tools, not threats.

If we really wanted to protect ourselves against the harmful effects of AIs, both now and in the future, and maximize the beneficial impact of these AIs, the best way to do that would be to:

Get control of the data that AIs use for training.

How? Everyone contributing data to train a closed AI must explicitly consent (this is the path to personal data ownership and long-term prosperity).

To regain control of the AIs already built (GPT-4, etc.), we need a legislative clawback requiring that all AIs trained with data from the open Internet without specific consent be released as open-source code.

Optimally, if most big AIs adopt an open-source path of development due to this reform:

It would be easier to spot problems/dangers and prevent authoritarian use.

It would broaden and accelerate the prosperity delivered by this technology.

Rates of innovation would soar as millions of users found unexpected uses for it.

Sincerely,

John Robb

If you’re vested in the system, everything IS an existential risk.

The system is hyperextended and hyper-complex, built and changed by those long dead. Equality eliminates competence, and we are running out of people who understand the system and can even maintain it.

Democracy has gone deep into buying votes for the never-ending elections and has run out of tricks to sustain it.

Mass education as a jobs program turned a vast pool of young talent into a swamp of aging mediocrity: mediocrity with heavy debts and expensive tastes, and they vote.

It’s the end of their world, so to them it’s the end of the world.